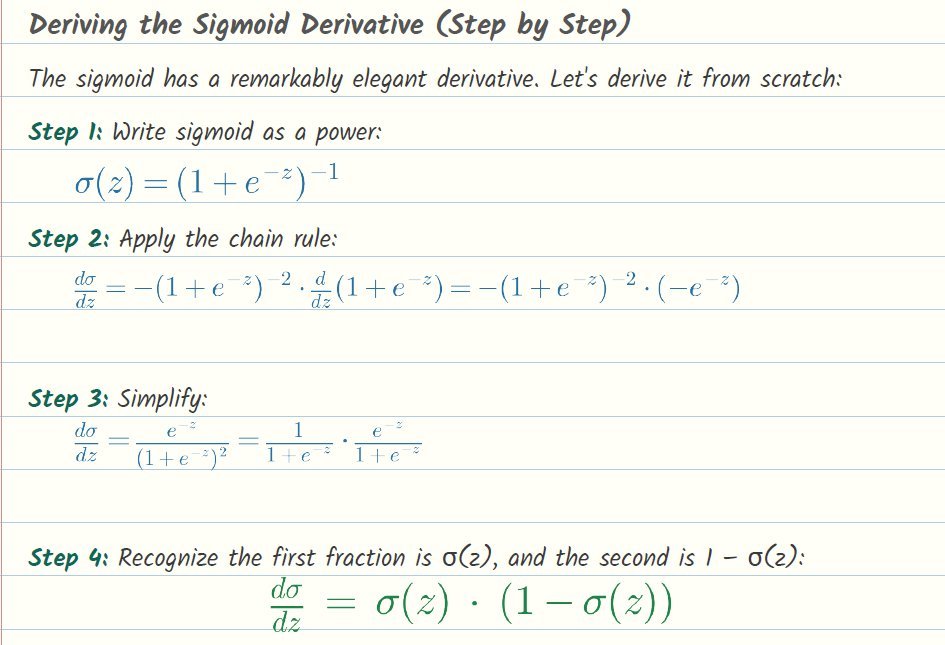

Logistic Regression — Full Derivation

The chapter that most people cite as the one that made it click. From MLE to the gradient update rule, every step shown — including the sigmoid derivative worked out four lines at a time.

Backpropagation — What's Really Happening

Backprop derived from the chain rule with one worked example on a small network. No “it can be shown that” anywhere.

The Bellman Equation, Intuitively

Why the Bellman equation is just “the value of now equals now plus the value of next.” Works out the gridworld case.

Gradient Descent — From Scratch

The definition of a derivative, the idea of going downhill, and the update rule. For anyone who's memorized gradient descent without understanding it.

Convolution, Really

What a convolution actually does to a pixel grid, why it's called that, and how it built into the first CNN.

Attention — One Head, Step by Step

A single attention head, worked out with small matrices you can follow by hand. No dot products hidden in the notation.